Automatic Transcription of Tablature from Steel-String Acoustic Guitar Audio

Overview

The guitar is a polyphonic instrument, meaning that it can play more than one pitch simultaneously. The piano is also polyphonic, but whereas the piano has exactly one key for each pitch, the guitar can produce the same pitch on several different strings. Standard music notation, with notes on a staff, shows the pitch and duration of each note, which translates to a unique sequence of piano keys. The sequence of string/fret combinations needed to play standard notation on guitar is not unique. Furthermore, although the pitch of a note may be the same on different strings, the tone quality of the note may differ, and the playability of a given set of string/fret combinations in a chord varies greatly. Supplying guitar tablature (guitar tabs) in addition to staff notation tells the musician not only what note to play but also where to play it.
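The non-uniqueness described above can be made concrete with a minimal sketch. It assumes standard tuning, MIDI note numbers for pitch, and a highest usable fret of 12; the names `STANDARD_TUNING`, `MAX_FRET`, and `positions_for_pitch` are hypothetical and chosen only for illustration.

```python
# Open-string MIDI pitches in standard tuning, keyed by string number
# (string 6 is the low E2 string, string 1 is the high E4 string).
STANDARD_TUNING = {6: 40, 5: 45, 4: 50, 3: 55, 2: 59, 1: 64}
MAX_FRET = 12  # assumption: highest fret considered in this sketch


def positions_for_pitch(midi_pitch, tuning=STANDARD_TUNING, max_fret=MAX_FRET):
    """Return every (string, fret) pair that sounds the given MIDI pitch."""
    positions = []
    for string, open_pitch in sorted(tuning.items()):
        fret = midi_pitch - open_pitch  # one fret raises the pitch one semitone
        if 0 <= fret <= max_fret:
            positions.append((string, fret))
    return positions


# E4 (MIDI 64) has one staff position but several places on the fretboard.
print(positions_for_pitch(64))  # [(1, 0), (2, 5), (3, 9)]
```

A piano would map MIDI 64 to a single key; here the same pitch maps to three string/fret pairs, which is the ambiguity tablature resolves.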

Transcription of music audio from an isolated monophonic instrument such as the flute is well developed and usually very accurate. Transcription of isolated polyphonic instruments is considerably harder, but is now reasonably accurate. Transcription of an ensemble of instruments recorded together remains a very difficult problem. An isolated guitar recording can therefore be translated fairly well to staff notation. This research project attempts to improve the ability to add tablature information to the automatically transcribed staff notation.

We choose to study steel-string guitars (rather than nylon-string guitars) because magnetic pickups respond to steel strings, which makes it relatively easy to obtain information about individual strings using a set of six individual magnetic pickup coils. We study acoustic guitars because it is much more common to play them with little or no effects processing (such as reverb or distortion) than it is with electric guitars. However, a major difficulty is that the timbre of acoustic guitars varies greatly due to differences in woods, shapes, sizes, and string weight, among other variables. This makes it difficult to separate the fundamental pitch of a plucked note from its harmonics. Although we aim to generate a large amount of audio data labelled with ground-truth individual-string information, the goal is to use this data to train models that can create tab using only the overall audio signal from a single microphone or pickup (unlabelled data).
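One reason harmonics are troublesome can be seen with a small calculation. The sketch below assumes an idealized string whose harmonics are exact integer multiples of the fundamental (real strings deviate slightly) and equal temperament with A4 = 440 Hz. The helper names are hypothetical.

```python
import math

A4_HZ = 440.0  # assumption: equal temperament referenced to A4 = 440 Hz
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def midi_to_hz(midi_pitch):
    """Frequency in Hz of a MIDI note number."""
    return A4_HZ * 2 ** ((midi_pitch - 69) / 12)


def hz_to_nearest_midi(freq_hz):
    """MIDI note number closest to a frequency."""
    return round(69 + 12 * math.log2(freq_hz / A4_HZ))


def midi_name(midi_pitch):
    """Note name with octave, e.g. 40 -> 'E2'."""
    return f"{NOTE_NAMES[midi_pitch % 12]}{midi_pitch // 12 - 1}"


def harmonic_notes(midi_pitch, n_harmonics=4):
    """Nearest note name for each of the first n ideal harmonics."""
    f0 = midi_to_hz(midi_pitch)
    return [midi_name(hz_to_nearest_midi(k * f0)) for k in range(1, n_harmonics + 1)]


# Harmonics of the open low E string (E2, MIDI 40).
print(harmonic_notes(40))  # ['E2', 'E3', 'B3', 'E4']
```

The third and fourth harmonics of the low E string land on the pitches of the open B and high E strings, so energy at those frequencies does not by itself show that those strings were played.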

Although a given pitch can usually be played on two or three different guitar strings, the options for a given combination of pitches are often more limited because only one pitch can be played on any given string at one time. Also, given a particular combination of string tuning and capo placement, some combinations of strings/frets are reasonably playable by the average human and others are not. We refer to these as playability constraints and intend to incorporate them in our algorithms. As a starting point we will assume that the guitar is in standard tuning (EADGBE, from the lowest-pitched E2 string to the highest-pitched E4 string). We will also initially assume no capo, but hope to remove this assumption quickly.
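A minimal sketch of how such playability constraints might prune candidate fingerings is shown below. It assumes standard tuning, no capo, frets 0 to 12, and a maximum spread of four frets between fretted notes; that spread limit and all function names are illustrative assumptions, not measured limits or the project's actual algorithm.

```python
from itertools import product

# Open-string MIDI pitches in standard tuning (string 6 = low E2, string 1 = high E4).
STANDARD_TUNING = {6: 40, 5: 45, 4: 50, 3: 55, 2: 59, 1: 64}
MAX_FRET = 12      # assumption: highest fret considered
MAX_FRET_SPAN = 4  # assumption: largest comfortable spread between fretted notes


def positions_for_pitch(midi_pitch):
    """All (string, fret) pairs that sound the given MIDI pitch."""
    return [(s, midi_pitch - p) for s, p in sorted(STANDARD_TUNING.items())
            if 0 <= midi_pitch - p <= MAX_FRET]


def playable_fingerings(pitches, max_span=MAX_FRET_SPAN):
    """Fingerings for simultaneous pitches that satisfy both constraints."""
    fingerings = []
    for combo in product(*(positions_for_pitch(p) for p in pitches)):
        strings = [s for s, _ in combo]
        if len(set(strings)) != len(strings):
            continue  # only one pitch can sound on a string at a time
        fretted = [f for _, f in combo if f > 0]  # open strings need no finger
        if fretted and max(fretted) - min(fretted) > max_span:
            continue  # hand stretch too wide under the assumed limit
        fingerings.append(combo)
    return fingerings


# C major triad: C3 (48), E3 (52), G3 (55).
for fingering in playable_fingerings([48, 52, 55]):
    print(fingering)
```

Each pitch in the triad has two or three possible positions, giving 18 raw combinations, but most are rejected by the one-pitch-per-string rule or the fret-span limit.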

Methods

Step one: Build a database of guitar audio recordings labelled with ground truth tablature.

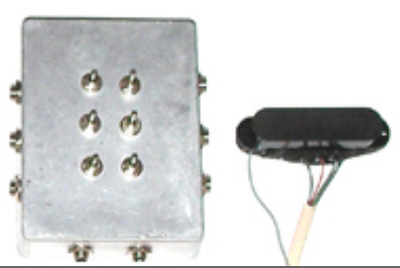

We will record a number of different acoustic guitars and players, as well as different playing styles. Even if a player tries to play from a specified tablature, what is actually played may not match what was intended, so we will observe the actual vibrations of the individual strings to produce labelled data. We intend to record audio from a stand-mounted microphone and (when possible) the guitar's standard pickup output. We have a pickup that can be mounted in a guitar sound hole and that has six separate coil outputs (one per string). There is some bleed between adjacent strings and coils, but 6-10 dB of adjacent-string rejection seems obtainable. We have a multi-track recording system that can handle eight simultaneous channels of audio, which allows for recording of the microphone, the standard pickup, and the six individual string coils. When the guitar has a standard pickup, we may make some microphone recordings that include the player singing as well. This would be useful for future work where the guitar audio is contaminated with vocals (a more difficult problem).
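To give a feel for what 6-10 dB of rejection means, the sketch below converts the figures to linear ratios. It assumes the quoted values refer to signal amplitude (so the conversion uses 20 log10); the function name is hypothetical.

```python
def db_to_amplitude_ratio(rejection_db):
    """Linear amplitude of the bleed relative to the wanted string's signal."""
    return 10 ** (-rejection_db / 20)


for rejection_db in (6, 10):
    ratio = db_to_amplitude_ratio(rejection_db)
    print(f"{rejection_db} dB rejection -> bleed at {ratio:.2f} of full amplitude")
```

Under that assumption an adjacent string still appears at roughly a third to a half of its full amplitude, so the six coil channels cannot be treated as perfectly isolated when producing labels.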

We also have a guitar MIDI controller that can send individual-string note onset times and pitches. We will attempt to record this MIDI data and align it with the 8-channel audio data. We have several commercial audio-to-MIDI conversion software packages. We will attempt to create an automated procedure that fuses the output of audio-to-MIDI conversion of the eight audio channels with the six channels of direct MIDI data to create our best estimate of the ground-truth string-strike labels. This information should include string number, pitch, time of strike, and intensity (MIDI velocity). We hope to supply this labelling in the form of MIDI files aligned with the audio and as staff-plus-tablature PDF files for visualization purposes.
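The fusion procedure is still to be designed. As one possible starting point, the sketch below confirms each direct-MIDI event when an audio-derived event with the same string and pitch occurs within a small time window. The `NoteEvent` fields follow the label contents listed above; the greedy matching rule, the 50 ms tolerance, and all names are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class NoteEvent:
    onset: float                  # time of strike, in seconds
    pitch: int                    # MIDI note number
    velocity: int                 # MIDI velocity (intensity)
    string: Optional[int] = None  # string number 1-6, when known


def fuse_events(midi_events, audio_events, tolerance=0.05):
    """Pair each direct-MIDI event with a confirmation flag.

    An event is confirmed when an unused audio-derived event on the same
    string, with the same pitch, lies within `tolerance` seconds of it.
    """
    unused = list(audio_events)
    fused = []
    for event in sorted(midi_events, key=lambda e: e.onset):
        matches = [a for a in unused
                   if a.string == event.string and a.pitch == event.pitch
                   and abs(a.onset - event.onset) <= tolerance]
        if matches:
            # Consume the closest match so it cannot confirm a second event.
            unused.remove(min(matches, key=lambda a: abs(a.onset - event.onset)))
        fused.append((event, bool(matches)))
    return fused


midi = [NoteEvent(0.50, 64, 90, string=1), NoteEvent(1.00, 59, 80, string=2)]
audio = [NoteEvent(0.52, 64, 85, string=1), NoteEvent(1.20, 59, 70, string=2)]
for event, confirmed in fuse_events(midi, audio):
    print(event, "confirmed" if confirmed else "unconfirmed")
```

Unconfirmed events could then be flagged for manual review rather than discarded, since either source may be the one in error.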

Step two: Use the labelled audio recordings to train, test, and validate models capable of generating tablature from unlabelled audio recordings.

We will examine a number of modeling methods for estimating tablature from audio data. These methods will come from both machine learning and traditional modeling. Deep learning via TensorFlow will be one of these modeling methods.
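Whatever modeling method is used, its output has to be scored against the ground-truth labels during testing and validation. A minimal sketch of one possible scoring scheme follows, assuming each note is represented as a hypothetical `(time_frame, string, fret)` tuple and that precision and recall over exact matches are the quantities of interest; the project has not fixed an evaluation metric.

```python
def tab_precision_recall(predicted, reference):
    """Precision and recall over exact (time_frame, string, fret) matches."""
    predicted, reference = set(predicted), set(reference)
    true_positives = len(predicted & reference)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(reference) if reference else 0.0
    return precision, recall


# Ground truth: C major triad played as string 5 fret 3, string 4 fret 2, string 3 open.
reference = [(0, 5, 3), (0, 4, 2), (0, 3, 0)]
# Prediction: same three pitches, but C3 placed on string 6 fret 8 instead.
predicted = [(0, 6, 8), (0, 4, 2), (0, 3, 0)]

precision, recall = tab_precision_recall(predicted, reference)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

In this example every pitch is correct, yet the score drops because one note is assigned to the wrong string, which is exactly the kind of error a staff-notation metric would miss.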

Equipment

Individual-String Audio Pickup: Ubertar Hexaphonic Guitar Pickup

Individual-String MIDI Controller: Fishman TriplePlay Wireless Guitar Controller (user guide) (tutorials)

Eight Channel Audio Recording: TASCAM DP-24SD Digital Portastudio (user manual)

Microphone: Shure SM-58

Guitars: Taylor 214ce, Fender DG8S

Software

Python (our preferred programming language)

LibROSA (audio and music processing package in Python)

TensorFlow (deep learning package in Python)

Logic Pro X (digital audio workstation software)

Melodyne (audio analysis software that has automatic music transcription capabilities)

Sibelius (music engraving software)

References